Why Banks and Financial Services Networks Need Hardware-Accelerated Data Encryption

April 21, 2026 | Post Quantum Cryptography

There was a time when encryption in financial services sat quietly in the background: an essential safeguard, but not something that shaped the architecture of the network itself. That time has passed. Q-day is inevitable.

Today, encryption is everywhere. It protects transactions, secures internal communications, and underpins trust across an increasingly complex financial ecosystem. But as encryption has expanded, something subtle, and critical, has happened: it has moved from being a protective control to becoming part of the data plane itself.

And yet, much of it is still running on foundations never designed for that role.

This is where the conversation changes.

Why Software-Only Encryption No Longer Fits Financial Services Networks

Software-Only Encryption Was Never Designed as a Primary Enforcement Layer

Most encryption technologies deployed across financial services were built on a different assumption: that encryption would be applied selectively, not universally.

They were designed for moderate traffic volumes, intermittent use, and spare CPU capacity to absorb overhead.

But modern financial environments don’t operate like that anymore.

Encryption is now always on, high volume, and mission-critical. Without much fanfare, encryption has become embedded in the core of the network, handling vast volumes of sensitive data in real time. The challenge is that software-based approaches were never intended to operate as the primary enforcement layer at this scale.

How Financial Services Networks Have Fundamentally Changed

Financial services networks have evolved rapidly, and not always in ways legacy encryption models can comfortably support.

- Explosive growth in encrypted east–west traffic between trading systems, payment rails, and data centres

- Latency-sensitive workloads where predictability matters as much as speed

- Highly meshed architectures spanning on-prem, cloud, and hybrid environments

- Increasing regulatory scrutiny under frameworks like DORA and NIS2

In this environment, encryption is no longer a layer you can simply add. It is woven directly into operational flow.

The Architectural Mismatch This Creates

This shift has exposed a fundamental tension. Software-only encryption competes for the same resources as the applications it is meant to protect.

It draws from shared CPU capacity, meaning performance becomes dependent on workload conditions. Under pressure, whether from peak trading activity, payment spikes, or failover scenarios, encryption behaviour can shift in ways that are difficult to predict or test.

This creates a fragile balance between security, performance, and resilience, one that financial institutions cannot afford to leave to chance. The encryption of financial data cannot become the bottleneck.

Why This Matters Now

The urgency is immediate rather than theoretical.

Regulators increasingly expect firms to demonstrate that security controls behave consistently under stress. Encryption failures, or even performance degradation, can directly affect service availability, customer outcomes, and regulatory standing.

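At the same time, preparation for post-quantum cryptography is accelerating. Post-quantum algorithms use considerably larger keys, ciphertexts, and signatures than the RSA and elliptic-curve schemes they replace, which will significantly increase cryptographic workloads and place even greater strain on already stretched architectures.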

Financial institutions are now being forced to rethink not just what encryption they use, but where and how it is enforced.
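One visible part of that extra post-quantum load is the size of key-exchange messages. The sketch below compares the key-share bytes carried in a TLS 1.3 handshake using classical X25519 with those carried by the hybrid X25519MLKEM768 group. The byte counts are the published X25519 (RFC 7748) and ML-KEM-768 (FIPS 203) sizes; the helper functions and the 50,000 handshakes-per-second rate are hypothetical, and certificates, signatures and the rest of the handshake are deliberately ignored.

```python
# Key-share sizes in bytes (public values only; certificates and signatures excluded).
X25519_SHARE = 32            # RFC 7748 public key
MLKEM768_ENCAPS_KEY = 1184   # FIPS 203, ML-KEM-768 encapsulation key (client -> server)
MLKEM768_CIPHERTEXT = 1088   # FIPS 203, ML-KEM-768 ciphertext (server -> client)


def key_exchange_bytes(hybrid: bool) -> int:
    """Bytes of key-share material exchanged in one TLS 1.3 handshake."""
    if hybrid:
        # The hybrid group sends an ML-KEM value plus an X25519 share in each direction.
        return (MLKEM768_ENCAPS_KEY + X25519_SHARE) + (MLKEM768_CIPHERTEXT + X25519_SHARE)
    return X25519_SHARE + X25519_SHARE


def extra_key_exchange_mbps(handshakes_per_second: int) -> float:
    """Additional key-share traffic (megabits per second) from moving to the hybrid group."""
    extra = key_exchange_bytes(hybrid=True) - key_exchange_bytes(hybrid=False)
    return extra * 8 * handshakes_per_second / 1_000_000


classical = key_exchange_bytes(hybrid=False)  # 64 bytes
hybrid = key_exchange_bytes(hybrid=True)      # 2336 bytes
print(f"classical: {classical} B, hybrid: {hybrid} B, ratio: {hybrid / classical:.1f}x")
# Hypothetical load: 50,000 new TLS sessions per second at one aggregation point.
print(f"extra key-share traffic: {extra_key_exchange_mbps(50_000):.1f} Mbit/s")
```

The point is not the exact figure but the multiplier: the same handshake rate now moves roughly 36 times as much key-exchange data, before signature sizes are even considered.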

Core Encryption Technologies and Practices in Financial Services

Encryption Algorithms Used in Banking

Two core technologies underpin most encryption strategies in financial services.

- Advanced Encryption Standard (AES), widely adopted for protecting sensitive data both at rest and in transit, delivering strong security but becoming computationally demanding at scale when handled purely in software

- RSA, essential for secure key exchange and digital signatures and forming the backbone of public key infrastructure, but comparatively expensive to compute, so its performance cost grows in highly connected environments

Key Management Practices

Encryption strength is inseparable from how keys are managed. As environments scale, this becomes an operational challenge rather than a purely technical one.

Secure key generation, storage, and distribution must be tightly controlled. Regular rotation is essential to reduce exposure but introduces complexity when thousands of encrypted connections are in play.
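Rotation adds complexity because old keys cannot simply be thrown away: anything protected under a previous key still has to be processed until it is re-protected or expires. The sketch below is a minimal illustration of a versioned keyring, assuming every protected item records which key version was used. Python's standard library has no block cipher, so HMAC-SHA256 tags stand in for encryption here; the `KeyRing` class and its methods are hypothetical, not the API of any product or library.

```python
import hashlib
import hmac
import secrets
from dataclasses import dataclass, field


@dataclass
class KeyRing:
    """Hypothetical versioned keyring: new data uses the active key,
    older versions stay available until they are explicitly retired."""
    keys: dict = field(default_factory=dict)
    active: int = 0

    def __post_init__(self) -> None:
        if not self.keys:
            self.rotate()  # start with version 1

    def rotate(self) -> int:
        """Generate a fresh 256-bit key and make it the active version."""
        self.active += 1
        self.keys[self.active] = secrets.token_bytes(32)
        return self.active

    def retire(self, version: int) -> None:
        """Remove an old key once nothing still depends on it."""
        if version == self.active:
            raise ValueError("cannot retire the active key")
        self.keys.pop(version, None)

    def protect(self, message: bytes) -> tuple:
        """Tag a message with the active key and record which version was used."""
        tag = hmac.new(self.keys[self.active], message, hashlib.sha256).digest()
        return self.active, tag

    def verify(self, version: int, message: bytes, tag: bytes) -> bool:
        """Check a tag against the key version it was created with."""
        key = self.keys.get(version)
        if key is None:
            return False  # key retired: the item must be re-protected first
        expected = hmac.new(key, message, hashlib.sha256).digest()
        return hmac.compare_digest(expected, tag)


ring = KeyRing()
old_version, old_tag = ring.protect(b"payment-0001")
ring.rotate()                                                  # new data now uses version 2
assert ring.verify(old_version, b"payment-0001", old_tag)      # old data still verifies
ring.retire(old_version)
assert not ring.verify(old_version, b"payment-0001", old_tag)  # ...until its key is retired
```

Multiply this bookkeeping across thousands of connections and stored records, each tied to a key version, and rotation becomes an operational discipline rather than a single action.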

Access must also be carefully governed. Multi-factor authentication, role-based permissions, and strict separation of duties are all necessary to maintain auditability and trust.

As networks grow, key management quietly becomes one of the most demanding aspects of encryption strategy.

Endpoint and Database Encryption

Endpoint and database encryption remain critical layers of defence, protecting sensitive data where it resides.

- Securing endpoints such as ATMs, mobile banking applications, and employee devices

- Encrypting databases, including highly sensitive fields like account and card information

However, these protections do not extend into the network itself. Data in motion, constantly moving between systems, remains exposed to the limitations of the underlying encryption architecture.

Encryption for Network Transmission

Encryption in transit is now a standard expectation across financial services.

Transport Layer Security protects customer-facing channels and digital services. IPsec secures private networks, including SD-WAN and SASE architectures. Cloud encryption safeguards data across hybrid and multi-cloud environments.

These technologies are indispensable. Yet when implemented purely in software, they inherit the same challenges of scalability, contention, and unpredictability.

Regulatory and Compliance Considerations

Compliance Requirements for Financial Institutions

Encryption is firmly embedded within regulatory expectations.

Frameworks such as PCI DSS, GDPR, DORA, and NIS2 all reinforce its role as a baseline requirement. But expectations are evolving. Regulators are increasingly concerned with how encryption performs under real-world conditions, not just whether it is present.

Unpredictable behaviour, especially under stress, is no longer acceptable.

Security Frameworks and Standards

Financial institutions must align with established standards while preparing for future change.

- NIST-approved cryptographic algorithms provide a foundation for trusted security

- ISO/IEC 27001 offers guidance on implementing and managing encryption controls

- Cryptographic agility is becoming essential, enabling organisations to adapt as threats and standards evolve

This shift places pressure on existing architectures to be both robust and flexible.
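Cryptographic agility is easiest to see in code: the algorithm becomes a named, policy-controlled choice rather than something built into every caller. The sketch below is a minimal illustration under stated assumptions. The `REGISTRY`, the policy dictionaries and the `protect`/`check` functions are hypothetical, and HMAC-SHA-256 and HMAC-SHA3-256 from Python's standard library merely stand in for whatever algorithms, post-quantum ones included, an institution would actually register.

```python
import hashlib
import hmac

# Hypothetical registry: algorithm identifiers mapped to implementations.
# Adding a new algorithm means adding an entry here, not editing every caller.
REGISTRY = {
    "hmac-sha256": lambda key, msg: hmac.new(key, msg, hashlib.sha256).digest(),
    "hmac-sha3-256": lambda key, msg: hmac.new(key, msg, hashlib.sha3_256).digest(),
}


def protect(policy: dict, key: bytes, msg: bytes) -> tuple:
    """Apply the policy's preferred algorithm and label the output with its identifier."""
    alg = policy["preferred"]
    return alg, REGISTRY[alg](key, msg)


def check(policy: dict, key: bytes, msg: bytes, alg: str, tag: bytes) -> bool:
    """Accept only algorithms the current policy still allows."""
    if alg not in policy["accepted"] or alg not in REGISTRY:
        return False
    return hmac.compare_digest(REGISTRY[alg](key, msg), tag)


# Migration expressed as three policy versions rather than three code releases.
LEGACY = {"preferred": "hmac-sha256", "accepted": {"hmac-sha256"}}
TRANSITION = {"preferred": "hmac-sha3-256", "accepted": {"hmac-sha256", "hmac-sha3-256"}}
TARGET = {"preferred": "hmac-sha3-256", "accepted": {"hmac-sha3-256"}}

key = b"\x00" * 32  # demo key only
alg, tag = protect(LEGACY, key, b"settlement batch 42")
assert check(TRANSITION, key, b"settlement batch 42", alg, tag)  # old output still accepted
assert not check(TARGET, key, b"settlement batch 42", alg, tag)  # ...until policy retires it
```

Because every output carries its algorithm identifier, old and new algorithms can coexist during a transition, and retiring one becomes a policy change rather than a redesign.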

Protecting Customer Personally Identifiable Information (PII)

Protecting PII is one of the most visible and high-stakes applications of encryption.

Sensitive data must be secured to prevent fraud, identity theft, and unauthorised disclosure. It must also be transmitted safely across increasingly complex internal and external networks.

When encryption enforcement falters, even momentarily, the consequences can be immediate, affecting both customer trust and regulatory compliance.

Hardware-Accelerated Encryption as a Foundational Layer for Financial Services

Limitations of Software-Only Encryption Approaches

Software-only encryption was never designed to operate as the primary enforcement layer for financial services networks.

As traffic volumes increase, performance becomes variable. Latency and throughput can degrade under load, sometimes in subtle ways that only emerge during critical moments.

This lack of determinism makes it difficult to test, assure, and confidently demonstrate compliance. Over time, it creates friction between security requirements and operational realities.

Benefits of Hardware-Accelerated Encryption

Hardware-accelerated encryption addresses these challenges by shifting enforcement into a dedicated, purpose-built layer.

- Line-rate encryption and decryption, even under high-volume conditions

- Consistent, deterministic latency regardless of workload

- Support for real-time applications such as payments processing and trading systems
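What "line rate" demands is easy to underestimate. The arithmetic below is a worked example, not a benchmark. It assumes standard Ethernet framing (frames of at least 64 bytes, plus 20 bytes of preamble and inter-frame gap on the wire) and computes how many frames per second a fully loaded link carries and how long an encryption engine has to handle each one. The 100 Gbit/s link speed and the helper function names are illustrative assumptions.

```python
# Ethernet adds 8 bytes of preamble/SFD and a 12-byte inter-frame gap per frame.
WIRE_OVERHEAD_BYTES = 20


def frames_per_second(link_bps: float, frame_bytes: int) -> float:
    """Maximum frames per second on a fully loaded Ethernet link."""
    return link_bps / ((frame_bytes + WIRE_OVERHEAD_BYTES) * 8)


def per_frame_budget_ns(link_bps: float, frame_bytes: int) -> float:
    """Time available to process each frame, in nanoseconds, at line rate."""
    return 1e9 / frames_per_second(link_bps, frame_bytes)


for size in (64, 512, 1518):
    fps = frames_per_second(100e9, size)
    budget = per_frame_budget_ns(100e9, size)
    print(f"{size:>5}-byte frames: {fps / 1e6:7.2f} Mfps, {budget:7.2f} ns per frame")
```

With minimum-size frames at 100 Gbit/s this gives roughly 148.8 million frames per second, or 6.72 ns per frame: the budget an enforcement point has to meet on every frame, under all load conditions, to sustain line rate.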

By separating encryption from general-purpose compute, hardware reduces contention and stabilises performance.

It also enhances security. Removing cryptographic enforcement from software stacks reduces the attack surface and strengthens separation between data, control, and management planes.

Looking forward, hardware-based approaches enable smoother adoption of new cryptographic standards, including post-quantum encryption, without requiring fundamental architectural change.

Integration with Software-Based Security Controls

This evolution is not about replacing software, but about redefining its role.

Software continues to handle policy, orchestration, visibility, and monitoring. Hardware provides a stable and predictable enforcement layer beneath it.

Together, they create a more balanced and resilient architecture, one that is easier to manage, test, and trust.

Use Cases in Banking and Financial Services

Hardware-accelerated encryption is already being applied across a range of critical financial services environments.

- Securing high-throughput inter-data-centre traffic without introducing latency risk

- Protecting payment networks and trading platforms where timing is critical

- Supporting private WANs and cross-border operations at scale

- Enabling encryption upgrades driven by regulation without disruptive redesign

Each use case reflects the same underlying need: security that performs consistently under pressure.

Conclusion and Future Outlook

Summary of Key Points

Encryption has become foundational to financial services, underpinning security, resilience, and compliance. Software-based approaches remain essential, but they were never designed to operate as the primary enforcement layer at scale.

Hardware-accelerated encryption provides the missing structural foundation, enabling secure, deterministic, and scalable network performance.

Emerging Trends in Data Encryption for Financial Services

Several trends are shaping the future of encryption in financial services.

- A shift toward deterministic security architectures

- Increasing importance of cryptographic agility

- Preparation for post-quantum cryptography without disrupting existing infrastructure

These trends point toward a more integrated, architecture-driven approach to security.

Final Thoughts

Encryption decisions are increasingly architectural decisions.

Financial institutions need security controls that scale operationally, behave predictably under pressure, and adapt to future demands. Hardware-accelerated encryption enables this shift, strengthening existing controls while providing the stability modern networks require.

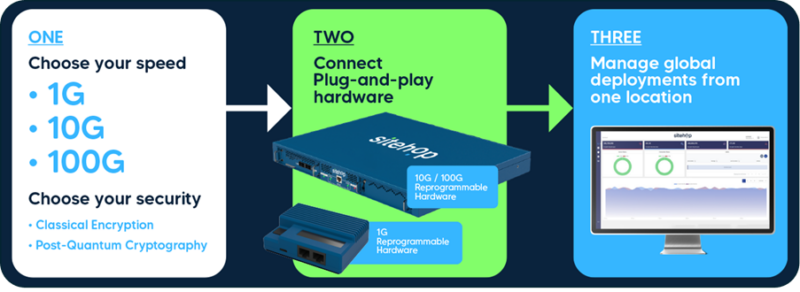

Future-proof your encryption.

Discover how Sitehop can help you build secure, deterministic, and ready-for-anything financial networks.

Request a demo if you’d like to see our platform in action.

Or stay in touch with Sitehop’s latest thinking: subscribe to our PQC Bulletin.

Or call us: +44 (0)114 478 2366

Sitehop.

Engineered for speed. Built for the future.

Google Just Set the Clock on Cybersecurity’s Biggest Ever Upgrade

April 1, 2026 | Post Quantum Cryptography

By now, most executives have heard some version of the quantum warning.

Quantum computers are coming. Encryption will break. Q-Day is inevitable.

At this point, it risks sounding like the cybersecurity equivalent of ‘eat your vegetables’: widely accepted, vaguely important, and very easy to ignore.

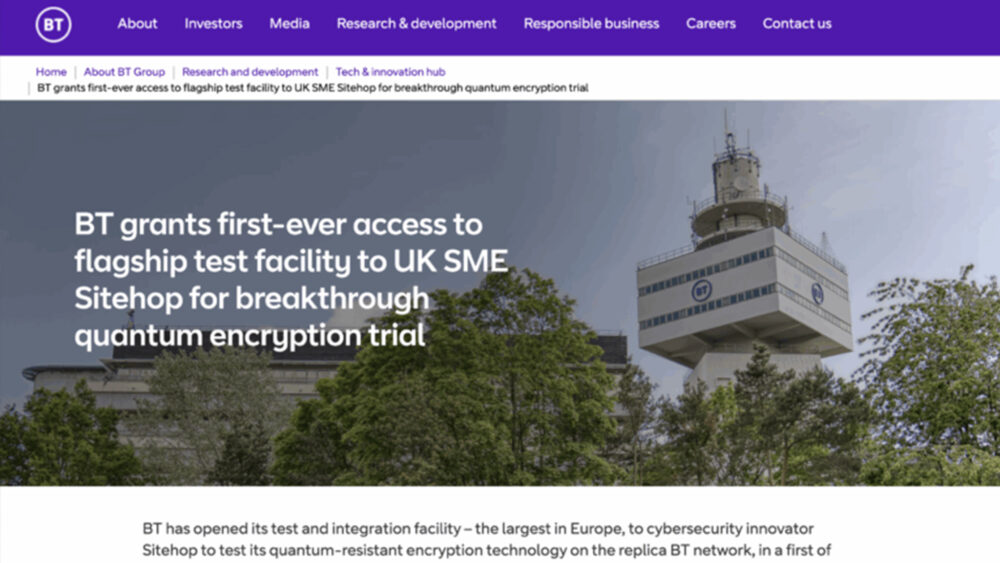

Google’s recent announcement changes that dynamic.

By setting a 2029 timeline for post-quantum cryptography migration, it has shifted the conversation from abstract theory to something far less comfortable: a deadline.

This is not a prediction of when quantum computers will break encryption. It is more practical, and more urgent. It is a signal that organisations should assume the window to act is closing, and plan accordingly.

From Theory to Timeline

The reason this matters is simple. For years, quantum risk has been discussed as a future possibility. Now it is being framed as a present planning problem.

The drivers are well understood:

- Improvements in quantum hardware, particularly error correction

- Rapid reductions in the estimated cost of breaking RSA

- Increasing confidence that large-scale systems are achievable

At the same time, the threat is already active in a quieter form. Sensitive data is being collected today with the expectation that it can be decrypted later, a tactic known as ‘harvest now, decrypt later’.

Which leads to an uncomfortable but important point:

The breach has, in many cases, already happened.

We are simply waiting for the decryption.

The Part Everyone Is Avoiding

If there is one reason the conversation keeps circling back to theory, it is this: the real problem is inconvenient. It is not about choosing a new algorithm. It is about replacing cryptography everywhere, and ‘everywhere’ is doing a lot of work here.

Cryptography sits inside applications and APIs, devices and firmware, networks and data flows, supply chains and third-party services, and legacy systems that nobody wants to touch.

Most organisations do not have a complete map of where it is used; some would struggle to produce even a partial one. Which makes the idea of a clean, orderly migration somewhat optimistic.

Why Waiting Feels Easier, and Why It Isn’t

There is a natural temptation to delay.

After all, the standards are new, the timelines are uncertain, and the problem feels distant.

And, if we are honest, the industry has a long tradition of discussing quantum risk without doing very much about it.

But procrastination is not neutral. It creates two very practical risks:

- More data at risk: every year adds to the volume of information that could be decrypted later

- Less time to fix it properly: migration windows shrink, increasing the likelihood of rushed, disruptive implementations

Put differently: waiting does not reduce uncertainty. It simply reduces options.

The Legal Grey Area, and Why It Matters

There is a common assumption that quantum risk is too early to carry real liability. That assumption may not hold. Under frameworks such as GDPR, organisations are required to implement ‘appropriate technical and organisational measures’, often interpreted as maintaining ‘state of the art’ security.

In practice, this means:

- Using current, proven protections

- Regularly reviewing and updating controls

- Responding to known, credible threats

‘State of the art’ is not fixed. It evolves.

If post-quantum cryptography is available, standardised, and increasingly adopted, and organisations choose not to act, the question becomes uncomfortable:

Is inaction still defensible?

There is, as yet, no case law defining liability in a post-quantum breach scenario. But that may offer little reassurance, because the organisations that test that boundary will do so after the breach, not before it.

The Conversation Few Boards Want to Have

There is another, less technical barrier. Many organisations struggle to take this issue to the board in clear terms, because the honest version sounds like this:

‘At some point in the future, the cryptography that underpins everything we do may stop working.’

That is not an easy message to deliver. Nor is it an easy one for boards to act on. So the issue is often softened:

- Framed as a long-term risk

- Delegated to technical teams

- Or deferred for ‘further monitoring’

But the combination of clearer timelines and increasing industry movement makes that position harder to sustain. This is no longer just a technical issue. It is a governance decision.

The Problem Is Not Cryptography, It Is Infrastructure

Much of the current discussion focuses on post-quantum algorithms. This is necessary, but it misses the point.

The real challenge is operational.

Replacing cryptography across global infrastructure is not a patch. It is a transformation. And most organisations are not structured to do it quickly, safely, or at all without significant disruption.

Google’s emphasis on ‘crypto agility’, and its integration of post-quantum standards into platforms such as Android, reflects this reality. Security must be designed to evolve, not to be replaced wholesale.

This is where the gap lies.

What Organisations Should Actually Do

If the conversation is to move beyond theory, it needs to become practical.

The instinct is often to start with multi-year crypto audits. That instinct is understandable, but increasingly flawed. By the time you have perfectly mapped where cryptography sits, the timeline to safely replace it may already have closed. The priority is not perfect visibility. It is controlled remediation at pace.

Three priorities stand out:

- Move from discovery to action
  - Assume RSA and ECC are widely embedded
  - Prioritise high-risk, high-value systems first
  - Begin upgrading now, not after full audits

  This is not about understanding everything. It is about reducing exposure quickly.

- Build the ability to adapt
  - Avoid hard-coded cryptography
  - Enable updates without replacing entire systems
  - Design for flexibility

- Think beyond the algorithm
  - Treat cryptography as infrastructure
  - Plan for continuous change, not one-off migration
  - Focus on deployment, not just selection

None of this is particularly glamorous. But it is where the real work lies.
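The "assume RSA and ECC are everywhere, fix the highest-risk systems first" approach can be expressed as a simple triage over whatever partial inventory already exists. The sketch below is hypothetical throughout: the system names, fields, scoring formula and doubling for hard-to-change systems are illustrative assumptions, not a standard methodology.

```python
from dataclasses import dataclass

# Public-key algorithms vulnerable to a large-scale quantum computer (Shor's algorithm).
QUANTUM_VULNERABLE = {"RSA", "ECDH", "ECDSA", "DH"}


@dataclass
class System:
    name: str
    public_key_algs: set       # algorithms observed (or assumed) in use
    data_lifetime_years: int   # how long the data it carries must stay confidential
    business_value: int        # 1 (low) to 5 (critical), assigned by the business
    crypto_agile: bool         # can algorithms change without replacing the system?


def priority(system: System) -> int:
    """Higher score = remediate sooner. Systems with nothing discovered are assumed to use RSA."""
    algs = system.public_key_algs or {"RSA"}
    if not algs & QUANTUM_VULNERABLE:
        return 0
    score = system.data_lifetime_years * system.business_value
    # Hard-to-change systems need the longest lead time, so start them earlier.
    return score * (1 if system.crypto_agile else 2)


inventory = [
    System("customer portal", {"ECDH", "ECDSA"}, 2, 4, True),
    System("inter-DC backbone", {"RSA", "ECDH"}, 20, 5, False),
    System("archive replication", set(), 30, 3, False),
    System("internal wiki", {"ML-KEM"}, 1, 1, True),
]

for s in sorted(inventory, key=priority, reverse=True):
    print(f"{priority(s):4d}  {s.name}")
```

Under these illustrative inputs the inter-data-centre backbone ranks first, and the archive replication path ranks second even though nothing has been discovered about it yet, because it is assumed to use RSA rather than waiting for a full audit.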

A More Practical Perspective

At Sitehop, we view the quantum transition less as a cryptographic problem and more as an infrastructure one.

The organisations that succeed will not be those that simply adopt post-quantum algorithms. They will be those that can deploy changes at scale, update security dynamically, and maintain performance and uptime. In short, those that can evolve without disruption.

This requires architectures where cryptographic functions can be updated at line speed across networks, rather than replaced system by system.

The Clock Is Now Visible

Google’s timeline does not guarantee when Q-Day will arrive. But it does something arguably more important: it makes inaction harder to justify.

The industry has moved from ‘Quantum is coming’ to ‘You should already be preparing’.

The next phase will be less forgiving, because this is not just another upgrade cycle. It is a reset of the trust mechanisms that underpin the digital economy.

The longer organisations remain in the realm of theory, the more likely they are to encounter the problem in practice. And by that point, it will no longer be a discussion about timelines. It will be a discussion about accountability.

Harvest Now, Decrypt Later: Why Financial Institutions Cannot Afford to Wait on Post-Quantum Security

March 9, 2026 | Post Quantum Cryptography, Financial Services

Encrypted does not mean safe. Not anymore.

Across global financial markets, vast volumes of encrypted data move every second: transactions, pricing feeds, trading instructions, interbank messages, customer records. It is wrapped in strong cryptography and trusted protocols. It passes audits. It meets today’s standards.

But some of that data is already being copied.

Stored.

And kept for a future moment when it can be decrypted.

This is the reality of harvest now, decrypt later, and for financial institutions, the clock is already ticking.

Understanding ‘Harvest Now, Decrypt Later’ (HNDL)

Definition and Concept

Harvest Now, Decrypt Later (HNDL) is a strategic cyber tactic. Adversaries intercept and store encrypted data today, with the expectation that future advances in cryptanalysis, particularly quantum computing, will allow them to decrypt it.

Nothing breaks immediately. No alarms fire. No ransomware note appears.

Instead, it is a time-shifted breach.

Encryption can appear robust today while already failing long-term confidentiality requirements. Data that must remain secret for 10, 20, or even 30 years may already have been captured, waiting only for the means to decrypt it.

For financial institutions, that matters deeply. Transaction histories, structured products, customer records, regulatory archives, and trading algorithms are not short-lived assets. Their value, and their sensitivity, extends far beyond current infrastructure refresh cycles.

HNDL exploits that gap between system lifetime and data lifetime.

Key Threat Actors and Motivations

This is not opportunistic cybercrime.

HNDL is associated with nation-state actors, advanced persistent threat (APT) groups, and other well-resourced adversaries operating with long-term strategic objectives.

Their motivations are rarely immediate financial theft. Instead, they include:

• Strategic intelligence gathering

• Economic and competitive advantage

• Geopolitical leverage

• Long-term influence over markets and critical infrastructure

Encrypted financial data, such as transaction flows, liquidity movements, and proprietary pricing models, offers systemic insight. Few sectors concentrate such long-lived, high-value information as densely as banking and capital markets.

Financial institutions are uniquely attractive targets precisely because their data endures.

The Role of Quantum Computing in Harvest Now, Decrypt Later

Quantum computing is the enabling factor behind the HNDL model.

There is broad consensus that cryptographically relevant quantum computers do not exist at scale today. However, credible projections suggest they are plausible within the next 10–15 years.

That uncertainty does not reduce risk; it amplifies it.

Data harvested today can simply wait. The collection phase and the decryption phase are separated by time. Adversaries do not need quantum capability now. They only need storage, patience, and belief in future breakthroughs.

Institutions holding long-lived financial data cannot rely on a ‘wait and see’ approach. By the time quantum decryption becomes feasible, the damage may already be baked in.

For deeper background on evolving standards, see NIST guidance on post-quantum cryptography.

Strategic Risks of Harvest Now, Decrypt Later for Financial Institutions

Vulnerable Data Types and Long-Term Risks

The financial sector retains data longer than most industries, often for regulatory, contractual, or operational reasons.

At risk are:

• Financial transaction records and customer histories

• Trading strategies and proprietary analytics

• Pricing models and algorithmic execution logic

• Interbank communications and market infrastructure traffic

• Encrypted backups and archives retained for decades

Some of this data underpins competitive advantage. Some underpins systemic trust. Some underpins legal compliance.

All of it may outlive today’s cryptographic assumptions.

Exposure Windows and Confidentiality Lifetimes

The window between interception and decryption may span years.

But confidentiality lifetimes often span longer.

Government and industry guidance already assumes that post-quantum migration will take many years. Data encrypted in 2026 may need to remain secure well into the late 2030s and beyond.

The risk emerges when organisations design security around system refresh cycles rather than data value duration.

A trading platform may be replaced in five years.

The transaction data it generates may need to remain confidential for twenty.

HNDL exploits that asymmetry.
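This asymmetry is often summarised by Mosca's inequality: if the time data must stay confidential (x) plus the time needed to migrate to quantum-safe cryptography (y) exceeds the time until a cryptographically relevant quantum computer (CRQC) exists (z), some data is exposed. The sketch below applies it to figures used in this article, a twenty-year confidentiality requirement and the 10–15 year quantum projection; the five-year migration time and the `exposure_years` helper are assumptions for illustration.

```python
def exposure_years(confidentiality_years: float, migration_years: float, years_to_crqc: float) -> float:
    """Mosca's inequality: data is at risk when x + y > z.

    Returns how many years of required confidentiality would fall after a
    cryptographically relevant quantum computer arrives; 0 means no exposure.
    Data encrypted classically on the last day of migration must stay secret
    for x more years, so the exposed span is x + y - z when that is positive.
    """
    return max(0, confidentiality_years + migration_years - years_to_crqc)


# Transaction data that must stay confidential for 20 years, with an assumed 5-year migration.
for z in (10, 15):
    print(f"CRQC in {z} years -> exposed for {exposure_years(20, 5, z)} years")

# Short-lived data is far less exposed to harvest now, decrypt later.
print(exposure_years(2, 5, 15))
```

Under these assumptions, twenty-year data is exposed for 15 years if a CRQC arrives in 10 years and for 10 years if it arrives in 15, while two-year data is not exposed at all. The longer the confidentiality lifetime, the earlier migration has to be finished.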

Threat Models and Attack Vectors

Harvesting does not require breaking encryption.

It requires access to encrypted traffic, including:

• WAN and inter-data centre links

• Cloud connectivity paths

• East–west traffic within modern financial networks

• Long-haul backbone connections

Even trusted, compliant, and audited encrypted channels can be silently copied. Ciphertext can be stored at scale. Modern storage economics make retention trivial.

The absence of visible compromise does not mean the absence of exposure.

A Known Practice That Is Accelerating

Public reporting and historical disclosures have shown that intelligence agencies and sophisticated threat actors collect and retain large volumes of encrypted communications as part of long-term exploitation strategies.

Security researchers increasingly recognise harvest now, decrypt later as a logical extension of these long-standing practices, one that is accelerating as awareness of quantum computing advances spreads.

The model is simple: collect everything now. Decrypt when ready.

For institutions that assume encrypted equals secure indefinitely, that assumption no longer holds.

Preparing for the HNDL Threat: Post-Quantum Cryptography

Why Post-Quantum Security Is a Board-Level Responsibility

HNDL is not a narrow technical issue.

It is a long-term risk to institutional trust, competitiveness, and resilience.

Boards are accountable not only for today’s performance, but for safeguarding sensitive financial data beyond current leadership and technology cycles. Delayed action increases the likelihood of disruptive, forced migrations later, under regulatory pressure or threat escalation.

Regulatory frameworks such as DORA and NIS2 reinforce expectations around resilience, security by design, and the use of ‘state of the art’ cryptography.

For financial institutions holding long-lived data, the relevant risk window is already open, regardless of the exact timing of Q-day.

Migration Planning and Readiness

Post-quantum transitions will take years, not months.

NIST finalised its first post-quantum cryptography standards in 2024 to allow organisations time to prepare before large-scale decryption becomes feasible.

Preparation requires:

• Identifying systems and network paths with long upgrade cycles

• Prioritising backbone and data-in-motion encryption with extended confidentiality requirements

• Mapping cryptographic dependencies across hybrid and multi-cloud environments

Reactive migration under future pressure will be more expensive, more complex, and more disruptive.

Strategic migration is measured and deliberate.

Building Quantum Resilience

Adopting post-quantum algorithms is necessary, but not sufficient.

True resilience requires:

• Crypto-agile architectures that allow algorithms to evolve in place

• The ability to upgrade without wholesale infrastructure replacement

• Reduced HNDL exposure windows through forward-looking design

• Encryption platforms that maintain performance, determinism, and scalability

In financial markets, security cannot come at the expense of latency predictability or throughput. Protection and performance must coexist.

This is where infrastructure matters.

Sitehop’s PQC solutions are designed to deliver hardware-enforced, crypto-agile transport that strengthens encryption without compromising determinism or energy efficiency, helping financial institutions future-proof their critical network paths.

Monitoring and Mitigating HNDL Risks

Cryptographic longevity must be treated as an ongoing risk management discipline.

That includes:

• Tracking standards development and regulatory expectations

• Monitoring adversary capability evolution

• Embedding post-quantum readiness into broader resilience strategies

This is not a one-off project. It is a sustained programme aligned to long-term data protection horizons.

The Role of Encryption Algorithms and Cryptanalysis

RSA and elliptic curve cryptography underpin much of today’s secure communications. In a post-quantum context, they are vulnerable to sufficiently powerful quantum attacks.

However, algorithm strength alone does not eliminate HNDL risk.

Deploying post-quantum algorithms within architectures that cannot scale, adapt, or maintain performance simply shifts the problem elsewhere.

Financial institutions need encryption platforms designed for long-term evolution, not static point upgrades.

From Awareness to Action for Financial Institutions

Why Waiting Increases Long-Term Risk

Every day, more encrypted data accumulates.

Every quarter of delay narrows architectural options.

Every year without preparation increases future remediation cost.

HNDL risk compounds quietly. It does not announce itself.

By the time decryption becomes feasible, the opportunity to prevent exposure may already have passed.

What Financial Institutions Should Be Doing Now

Practical steps begin today:

• Map confidentiality lifetimes across financial data categories

• Assess network and encryption architectures for crypto agility

• Identify backbone and interconnect paths with extended secrecy requirements

• Embed post-quantum readiness into long-term infrastructure planning

• Align security, performance, and resilience objectives rather than trading them off

This is not about panic.

It is about prudence.

Financial institutions exist on trust, trust that money, markets, and data are protected not just today, but tomorrow.

Harvest now, decrypt later challenges that assumption.

The answer is not to wait for quantum certainty.

It is to future-proof your encryption now.

Ready to reduce your HNDL exposure and build quantum-resilient infrastructure?

Explore Sitehop’s approach and request a demo today.

To find out more, email info@sitehop.com

AI Is Scaling Faster Than Our Data Security Assumptions

February 23, 2026 | Post Quantum Cryptography, Encryption

As AI Scales, Can Data Security Keep Pace?

A recent AI macro deck from Andreessen Horowitz has been circulating widely among security and technology leaders. It is a strong piece of work, particularly in how it frames the economics of AI, the scale of hyperscaler investment, and where long-term value is likely to accrue.

What stood out most was not just what the deck stated directly, but what it quietly assumed.

As AI adoption accelerates, there is an implicit belief that the underlying infrastructure will scale alongside it. Especially when it comes to data movement and encryption, many assume this layer will simply keep up. In regulated and high-assurance environments, the assumptions underpinning AI and data security at scale are already being tested.

AI Changes How Data Moves, Not Just How It Is Processed

One of the clearest signals in the deck is the sheer scale of what is coming: trillions in AI-driven revenue, unprecedented infrastructure spend, and AI embedded across everyday workflows rather than isolated use cases.

What follows from this is often under-discussed: AI dramatically increases data in motion.

Not just traffic between users and systems, but continuous machine-to-machine flows, an increasingly AI-driven pattern of data in motion. Data moves between data centres, across sovereign and regulatory boundaries, from edge to core to cloud, and between autonomous services acting on each other’s behalf. In many environments, these flows are persistent, high-volume, and increasingly latency-sensitive.

This shift matters because most security models were designed for a very different world, one where data movement was more bounded, more predictable, and easier to contain.

Where the Friction Starts to Show

In highly regulated sectors such as banking, financial services, government, and critical infrastructure, teams are already feeling the pressure created by AI-driven data in motion. These are environments where performance, availability, and security are tightly coupled, and where failures tend to be systemic rather than isolated.

Latency becomes a hard constraint. Encryption overhead is quietly traded off for performance, often in ways that are difficult to see from a policy or audit perspective. Compensating controls are layered on top of fragile assumptions. Gaps open up between compliance intent and operational reality.

This does not mean teams are failing at security. It means the system boundaries have moved, and security controls are now operating in places and at scales they were never originally designed for.

As AI scales, the transport layer becomes a critical part of the risk surface. It is also a layer where existing security stacks are being stretched beyond what they were originally designed to handle. For many organisations, this is unfamiliar territory, a layer that was previously assumed to be stable, invisible, or someone else’s problem.

Infrastructure Is Where Durability Lives

One of the strongest themes in the deck is that AI winners will not all be visible applications. A disproportionate amount of long-term value will sit in infrastructure: the layers that are hard to replace, deeply embedded, and quietly assumed.

This is especially true in environments where performance is non-negotiable, compliance is continuous, and failures are systemic rather than local.

In those contexts, encryption cannot be a performance bottleneck, an operational burden, or a future cryptographic risk. It must operate at line rate, behave deterministically, and remain manageable without becoming another system teams need to fight.

Why This Matters Now for Regulated Industries

For sectors like banking, financial services, and insurance, this is not a future concern. It is already present in payments and clearing infrastructure, trading and market data environments, interconnects between regulated entities, and early sovereign and private AI deployments. These environments already operate at scale, under tight latency and availability constraints, and with little tolerance for unpredictable behaviour.

As AI increases both the volume and value of data in motion, the cost of getting this layer wrong compounds quickly, across performance, resilience, and compliance.

If encryption becomes the bottleneck, AI return on investment collapses.

A Closing Thought

The deck making the rounds does an excellent job of explaining why AI investment is accelerating and where economic value is likely to concentrate.

The next question for security and technology leaders is simpler, and harder.

Are the assumptions we are making about data in motion still valid at AI scale?

It is not a question with a single answer. But it is one worth asking early, before performance, security, and compliance start pulling in different directions.

Book a demo of Sitehop SAFE Series today!

To find out more, email info@sitehop.com

Or call us: +44 (0)114 478 2366

Sitehop.

Engineered for speed. Built for the future.

About Melissa Chambers – CEO & Co-Founder, Sitehop

Melissa is the CEO & Co-Founder of Sitehop, where she leads the development and scaling of high-speed, post-quantum encryption hardware that secures data in motion without compromising performance. With a background in hardware engineering, Melissa specialises in designing, manufacturing, and scaling deep tech products from concept to market.

Passionate about solving complex problems through engineering and advocating for women in STEM, Melissa has seen firsthand the impact of diverse technological perspectives. A proud member of Cyber Runway Ignite and the Women’s Engineering Society, Melissa was honoured to be recognised as Startup Magazine’s 2023 Inspirational Woman in Industry and 2024’s Most Inspiring Woman in Cyber. She also led Sitehop to win Cyber Security Innovation of the Year 2025 at the recent ClimbUK Awards.

Cryptographic Agility: An Immediate Risk for Financial Institutions

February 10, 2026 | Post Quantum Cryptography, Financial Services

For years, encryption has been treated as a box to tick. If traffic is encrypted, data is protected, and the audit passes. Or so the assumption goes. But that assumption no longer holds in financial services.

Cryptographic standards are evolving faster than procurement cycles, faster than infrastructure refreshes, and faster than most regulatory frameworks explicitly acknowledge. The result is a growing, largely invisible risk: encryption that is still running, still compliant on paper, but no longer fit for purpose.

This is where cryptographic agility becomes critical. Not as a future upgrade tied to quantum computing, but as an immediate operational requirement for financial institutions that need to maintain security, performance, and regulatory confidence at scale.

Understanding Cryptographic Agility in Financial Services

Regulators may not always use the term cryptographic agility, but their expectations are clear. Frameworks such as DORA and NIS2 place increasing emphasis on operational resilience, adaptability, and demonstrable control across ICT systems, including encryption.

In practice, this means institutions are expected not only to encrypt data, but to prove they can adapt cryptographic controls over time, without introducing unacceptable operational or systemic risk. Cryptographic agility is best understood in this regulatory context, not as an abstract cryptographic concept.

What Cryptographic Agility (Crypto-Agility) Really Means for Financial Institutions

Cryptographic agility, often shortened to crypto-agility, is the ability to change cryptographic algorithms, parameters, and implementations without replacing, re-architecting, or disrupting underlying infrastructure.
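To make the definition concrete, here is a minimal Python sketch of the indirection that crypto-agility relies on, using HMAC tags as a stand-in for any cryptographic primitive. The registry, the policy dictionaries and the function names are hypothetical illustrations, not a Sitehop or library API. Calling code asks a policy which algorithm to use, and every output carries its algorithm identifier, so switching algorithms becomes a policy change rather than a code change.

```python
import hashlib
import hmac

# Hypothetical registry: algorithm identifiers mapped to implementations.
ALGORITHMS = {
    "hmac-sha256": hashlib.sha256,
    "hmac-sha3-256": hashlib.sha3_256,
}

# Hypothetical policy: the algorithm used for new tags, and the set of
# algorithms still accepted when verifying existing ones.
POLICY = {"default": "hmac-sha256", "accepted": {"hmac-sha256"}}


def tag(key: bytes, message: bytes, policy: dict = POLICY) -> str:
    """Authenticate a message with the policy's current default algorithm.

    The algorithm identifier is prefixed to the tag, so verifiers can
    handle old and new algorithms side by side during a migration.
    """
    alg = policy["default"]
    digest = hmac.new(key, message, ALGORITHMS[alg]).hexdigest()
    return f"{alg}${digest}"


def verify(key: bytes, message: bytes, tagged: str, policy: dict = POLICY) -> bool:
    """Check a tag, rejecting any algorithm the policy no longer accepts."""
    alg, _, digest = tagged.partition("$")
    if alg not in policy["accepted"] or alg not in ALGORITHMS:
        return False
    expected = hmac.new(key, message, ALGORITHMS[alg]).hexdigest()
    return hmac.compare_digest(expected, digest)


if __name__ == "__main__":
    key = b"demo-key-not-for-production"
    old = tag(key, b"payment 42")

    # Step 1: switch the default but keep accepting the old algorithm.
    migrating = {"default": "hmac-sha3-256",
                 "accepted": {"hmac-sha256", "hmac-sha3-256"}}
    new = tag(key, b"payment 42", migrating)
    print(verify(key, b"payment 42", old, migrating))  # True: old tags still verify

    # Step 2: retire the old algorithm once everything has been re-tagged.
    retired = {"default": "hmac-sha3-256", "accepted": {"hmac-sha3-256"}}
    print(verify(key, b"payment 42", old, retired))  # False: old tags rejected
```

In this sketch a migration happens in two policy steps, first changing the default while still accepting the old algorithm and then retiring it, and no calling code changes in either step.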

This matters acutely in financial services. Data often has long retention periods. Networks are complex, interconnected, and highly regulated. Infrastructure lifecycles run for years, not months. In this environment, the difference between having encryption and being cryptographically agile is material.

An institution can be fully encrypted and still unable to respond safely or quickly when algorithms are deprecated, parameters need to change, or new standards are introduced. Crypto-agility supports regulatory requirements around long-lived data, evolving standards, and multi-year compliance horizons.

Increasingly, audits are assessing the ability to change cryptography, not just the presence of encryption. This is especially relevant for regulated data flows between institutions, third parties, market infrastructure, and regulators themselves.

Why Encryption Alone Is No Longer Sufficient in Banking and Finance

Many financial services environments run encryption that is technically present but operationally obsolete. Algorithms continue to function, traffic remains encrypted, and controls appear compliant — until standards move on.

This creates the risk of silent cryptographic failure. Nothing visibly breaks. No alarms trigger. But the cryptography no longer provides the level of assurance regulators, customers, or counterparties expect.

Audits and compliance checks often fail to detect this early because they focus on whether encryption exists, not whether it can evolve. As standards change, institutions can find themselves exposed despite appearing secure.

Under regulations such as DORA, which emphasise continuous ICT risk management, this gap matters. Environments can be encrypted but not crypto-agile, leaving institutions vulnerable as expectations evolve.

Key Drivers for Cryptographic Agility in Financial Services

Several forces are accelerating the need for cryptographic agility:

- Faster deprecation of cryptographic algorithms

- Post-quantum cryptography timelines that do not align with financial services procurement and refresh cycles

- Growing interconnection between on-premises, cloud, and third-party networks

At the same time, regulatory pressure is increasing. DORA, NIS2, and guidance from bodies such as ESMA all emphasise future-ready controls for data in motion, third-party risk, and secure machine-to-machine communications. The expectation is no longer static compliance, but demonstrable adaptability.

Common Barriers to Cryptographic Agility in Financial Environments

Despite this, many institutions struggle to implement crypto-agility in practice.

Legacy network infrastructure often has hard-coded cryptography. Software-based encryption is constrained by CPU, latency, and maintenance windows. Making cryptographic changes in live trading or payments environments introduces unacceptable operational risk.

Organisationally, security policy is often separated from network operations. Regulatory change can outpace internal procurement, certification, and infrastructure refresh cycles. The result is encryption architectures that increase risk precisely when cryptographic change is required to maintain compliance.

Implementing Cryptographic Agility Without Disrupting Financial Operations

In financial services, regulatory compliance cannot come at the expense of latency, uptime, or operational stability. Crypto-agility, therefore, has to be treated as an architectural requirement, not an operational afterthought.

The challenge is enabling cryptographic change without disruptive maintenance windows or widespread reconfiguration.

Designing Agility into Financial Network Architectures

Crypto-agility is most effective when designed into the network layer itself. Modular cryptographic design allows algorithms and parameters to be updated independently of applications and endpoints.

By decoupling cryptography from software stacks, institutions can enforce consistent controls across environments while aligning with established financial services operating models.

Why Software-Led Crypto-Agility Struggles at Financial Scale

Software-based encryption struggles to deliver crypto-agility at scale. CPU-bound encryption introduces performance limits in high-throughput environments. Latency and jitter affect trading, payments, and inter-data-centre traffic.

Cryptographic changes in software stacks have a large operational blast radius, increasing the risk of outages or unintended side effects — risks most financial institutions are unwilling to accept.

How Hardware-Enforced Encryption Enables Crypto-Agile Networks

Hardware-enforced cryptography changes this equation. By delivering deterministic performance, it removes the trade-off between security and speed.

Hardware also simplifies cryptographic transitions. Algorithms can be updated centrally, with minimal operational impact, while improving resilience, segregation of duties, and auditability — all critical requirements in regulated financial environments.

Preparing for Post-Quantum Cryptography in Financial Services

Post-quantum cryptography is not a single upgrade event. It is a transition that will unfold over years, involving hybrid classical and PQC approaches.

Crypto-agile architectures allow institutions to introduce PQC incrementally, without forklift upgrades, as standards evolve and regulatory guidance matures.
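One common way to build a hybrid classical and PQC key exchange is to feed both shared secrets into a single key derivation function, so the session key stays protected as long as either component holds. The sketch below assumes that concatenate-then-derive approach and implements HKDF (RFC 5869) with Python's standard library. The two secrets are random placeholders standing in for the outputs of a classical exchange such as X25519 and a PQC KEM such as ML-KEM-768, both of which produce 32-byte shared secrets; the function names and the `hybrid-kex-v1` label are hypothetical.

```python
import hashlib
import hmac
import os


def hkdf_sha256(ikm: bytes, salt: bytes, info: bytes, length: int = 32) -> bytes:
    """HKDF with SHA-256 (RFC 5869): extract a PRK, then expand it."""
    if length > 255 * hashlib.sha256().digest_size:
        raise ValueError("requested length too large for HKDF-SHA256")
    # Extract: an empty salt is treated as a string of zero bytes.
    prk = hmac.new(salt or bytes(32), ikm, hashlib.sha256).digest()
    # Expand: T(i) = HMAC(PRK, T(i-1) || info || i)
    okm, block, counter = b"", b"", 1
    while len(okm) < length:
        block = hmac.new(prk, block + info + bytes([counter]), hashlib.sha256).digest()
        okm += block
        counter += 1
    return okm[:length]


def hybrid_session_key(classical_secret: bytes, pqc_secret: bytes,
                       transcript: bytes) -> bytes:
    """Derive one session key from a classical and a PQC shared secret.

    Both secrets enter the KDF together, so an attacker has to recover
    both of them to learn the session key. The transcript binds the key
    to the handshake that produced it.
    """
    return hkdf_sha256(classical_secret + pqc_secret, salt=b"",
                       info=b"hybrid-kex-v1" + transcript)


if __name__ == "__main__":
    # Placeholders: in a real handshake these would come from, e.g.,
    # X25519 and ML-KEM-768, which both yield 32-byte shared secrets.
    classical = os.urandom(32)
    post_quantum = os.urandom(32)
    key = hybrid_session_key(classical, post_quantum, b"handshake-transcript")
    print(key.hex())
```

Because the algorithm-specific parts sit behind the combiner, replacing the PQC component as standards evolve changes the inputs rather than the derivation, which fits the incremental path described here.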

Governance, Key Management, and Lifecycle Control

Crypto-agility also depends on governance. Managing keys, certificates, and algorithms across large financial estates requires centralised control and clear lifecycle management.

Regulatory frameworks increasingly expect this visibility. DORA and NIS2 elevate evidence generation for audits, incident reviews, and third-party assurance. Crypto-agile architectures simplify regulator access, reporting, and long-term compliance management.

Building a Practical Roadmap for Cryptographic Agility in Financial Services

Effective roadmaps align cryptographic change with regulatory timelines, not just technology refresh cycles.

Assessing Current Cryptographic Risk in Financial Networks

This starts with identifying where encryption is static or tightly coupled to infrastructure, and mapping algorithm exposure across network paths and data flows.
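A cryptographic inventory can start as simple structured data. The sketch below is hypothetical: the `Flow` fields, the algorithm lists and the five-year retention threshold are illustrative assumptions, not a standard or an authoritative classification. It flags deprecated algorithms, long-lived data protected by quantum-vulnerable key exchange (the "harvest now, decrypt later" exposure), and flows whose cryptography is hard-coded.

```python
from dataclasses import dataclass

# Illustrative lists only. Shor's algorithm on a large quantum computer
# breaks RSA, finite-field DH and elliptic-curve schemes alike.
QUANTUM_VULNERABLE_KEX = {"RSA-2048", "DH-2048", "ECDH-P256", "X25519"}
DEPRECATED = {"3DES", "SHA-1", "RSA-1024"}
LONG_LIVED_YEARS = 5  # assumed threshold for "long-lived" data


@dataclass
class Flow:
    name: str             # network path or data flow being assessed
    key_exchange: str
    signature: str
    cipher: str
    retention_years: int  # how long the protected data must stay confidential
    hard_coded: bool      # True if the crypto cannot change without replacement


def assess(flow: Flow) -> list[str]:
    """Return human-readable findings for one flow."""
    findings = []
    for alg in (flow.key_exchange, flow.signature, flow.cipher):
        if alg in DEPRECATED:
            findings.append(f"{alg} is deprecated")
    if (flow.key_exchange in QUANTUM_VULNERABLE_KEX
            and flow.retention_years >= LONG_LIVED_YEARS):
        findings.append("long-lived data behind quantum-vulnerable key exchange")
    if flow.hard_coded:
        findings.append("static cryptography: change needs infrastructure replacement")
    return findings


if __name__ == "__main__":
    flows = [
        Flow("DC1-DC2 interconnect", "ECDH-P256", "ECDSA-P256", "AES-256-GCM", 10, True),
        Flow("branch VPN", "DH-2048", "RSA-1024", "3DES", 1, False),
    ]
    for f in flows:
        print(f.name, assess(f))
```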

Phased Adoption of Cryptographic Agility

Institutions can reduce risk by introducing agility at critical network boundaries first, separating cryptographic change from application change.

Moving From Policy to Enforced Cryptographic Control

Crypto-agility cannot live solely in policy documents. It must be embedded into the network fabric itself, ensuring security keeps pace with financial services innovation. For further reading, see FS-ISAC’s Guide to Building Cryptographic Agility in the Financial Sector.

Cryptographic Agility as a Core Control for Crypto-Agile Financial Services

Cryptographic agility is an immediate operational requirement in Financial Services, Banking and Insurance, not a future upgrade tied to quantum timelines. Under DORA and NIS2, the risk is no longer unencrypted data, but encryption that cannot evolve fast enough.

The most exposed institutions are not those without encryption, but those running infrastructure that is encrypted yet obsolete. Crypto-agility must move from policy and roadmaps into enforced, infrastructure-level control, particularly at the network layer.

Aligning security, performance, compliance, and long-term resilience is no longer optional. Future-proof your encryption security today: book a demo of Sitehop and see how crypto-agile networks are built for financial services, or visit this page for more information.

To find out more, email info@sitehop.com

Or call us: +44 (0)114 478 2366

When Encryption of Financial Data Becomes the Bottleneck

January 26, 2026 | Post Quantum Cryptography, Encryption

Encryption is no longer optional in financial services. It is embedded into regulatory expectations, contractual obligations, and customer trust. Every transaction, API call, backup, and interbank connection is expected to be encrypted by default.

Yet as financial networks scale, many organisations are discovering an uncomfortable truth: encryption of financial data, while essential, is quietly becoming a bottleneck. Not because the cryptography is weak, but because the way encryption is implemented across modern financial networks was never designed for sustained scale, volatility, or real-time resilience.

For security leaders, this creates a growing gap between being compliant and being operationally sound. Encryption meets the letter of regulation but undermines performance, visibility, and risk management in practice.

Encrypting Financial Data at Scale

Regulation has turned encryption into a baseline requirement across the financial ecosystem. Data must be protected in transit between banks, across cloud environments, and through complex third-party supply chains. As a result, encryption now touches almost every packet moving through financial networks.

This shift matters because scale is not accidental. It is the direct outcome of regulatory mandates, cloud adoption, and open banking architectures.

Where Encryption of Financial Data Breaks Down

In isolation, encryption often performs well. Individual links look healthy. Benchmarks pass. Problems emerge only when encryption is exposed to sustained transaction volumes and market volatility.

At peak load, financial networks start to show symptoms that are easy to misdiagnose:

- Latency and jitter appear only during busy trading windows or payment spikes

- Throughput ceilings emerge under stress rather than in testing

- Packet loss increases subtly, often below alert thresholds

Crucially, these issues are frequently blamed on applications or underlying networks. The encryption layer is assumed to be neutral. In reality, it is often the limiting factor.

Compliance-Driven Encryption vs Operational Reality

Data encryption for banks and financial services has historically been optimised for auditability. Can it be proven that data is encrypted? Are keys managed correctly? Are controls documented?

What is rarely examined is how encryption behaves at runtime.

Frameworks such as DORA and NIS2 emphasise both security and operational resilience. However, they do not explicitly account for encryption-induced performance degradation or loss of network observability. This creates a gap between “audit-ready” encryption and systems that remain resilient under real financial workloads.

The result is unseen performance debt: systems that are compliant on paper, but fragile in practice.

The Hidden Economic and Network Cost

Traditional encryption architectures rarely fail loudly. Instead, they drive cost.

As encrypted traffic scales, financial institutions respond by:

- Overprovisioning bandwidth to compensate for encryption-induced latency

- Deploying additional appliances to maintain throughput

- Adding security tooling to compensate for encrypted blind spots

Encryption efficiency in finance declines as complexity grows. More capacity and more tooling are used to mask architectural penalties rather than remove them.

Hardware-enforced, line-rate encryption changes this equation. Instead of compensating for encryption overhead, it eliminates it by design. Rising infrastructure and tooling costs are not inevitable; they are the result of architectural choices driven by compliance pressure rather than network efficiency.

Core Encryption Practices and Their Limits

Regulators are clear about intent: encrypt everything. The challenge lies in how that intent is realised architecturally.

Cryptography Is Rarely the Limiting Factor

In most financial environments, cryptographic strength is not the constraint. Mature algorithms are well understood, trusted, and broadly standardised across the industry.

The dominant constraint is where and how encryption is implemented:

- Inline on firewalls already performing multiple functions

- Embedded into SD-WAN platforms not designed for deterministic performance

- Terminated and re-established multiple times across east–west traffic flows

Architectural placement has a greater impact on performance and resilience than cryptographic choice.

Key Management and Access Control at Scale

As encrypted east–west traffic grows, key management complexity increases non-linearly. Rotation schedules, lifecycle governance, and access controls become operationally heavy.

Over time:

- Key management systems themselves become bottlenecks

- Rotation failures create outage risk

- Operational overhead grows faster than traffic volume
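The non-linear growth is easy to see in a full-mesh topology, where every site keeps its own tunnel, and its own keys, to every other site. This is a back-of-the-envelope sketch assuming a full mesh and one rekey per tunnel per interval; hub-and-spoke designs grow more slowly, and the function names are hypothetical.

```python
def full_mesh_tunnels(sites: int) -> int:
    """Site-to-site tunnels in a full mesh: n(n - 1) / 2."""
    return sites * (sites - 1) // 2


def rekeys_per_day(sites: int, rekey_interval_hours: float) -> float:
    """Rekey events per day, assuming one rekey per tunnel per interval."""
    return full_mesh_tunnels(sites) * 24 / rekey_interval_hours


if __name__ == "__main__":
    for n in (10, 20, 40):
        print(n, "sites:", full_mesh_tunnels(n), "tunnels,",
              rekeys_per_day(n, rekey_interval_hours=1), "rekeys/day")
```

Doubling from 20 to 40 sites roughly quadruples the tunnel count (190 to 780), so with hourly rekeying the estate goes from 4,560 to 18,720 rekey events a day.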

Strong IAM and authentication are table stakes. What changes with scale is visibility. Once traffic is encrypted, behavioural and traffic-level insight is reduced, even as compliance requirements for governance and auditability increase.

This is a structural tension: regulation demands stronger controls, while encryption reduces the operational visibility needed to manage them safely.

Storage, Backup, and Recovery Trade-offs

Encrypting financial data at rest across hybrid and cloud environments is essential. But encryption decisions often overlook recovery dynamics.

Encrypted backups protect data confidentiality, yet they extend restore times. RTO and RPO impacts surface only during real incidents, when recovery speed matters most. These trade-offs are rarely modelled upfront, but they directly affect operational resilience.

Operational Impact of Encrypted Networks

Supervisors are increasingly focused on how systems behave under stress. Encryption is no longer just a security control; it is an operational risk factor.

Performance and Scalability as First-Order Risks

The real impact of encryption emerges in production. Under sustained and peak workloads, performance, scalability, and determinism are tested simultaneously.

Encryption-induced latency affects:

- Trading performance and price execution

- Payment processing reliability

- Customer experience during peak demand

For latency-sensitive workloads, determinism matters more than raw speed. Hardware-based IPsec operating at full line rate avoids jitter and tail latency. Offloading encryption from firewalls and SD-WAN platforms restores headroom for inspection, routing, and policy enforcement.

This aligns directly with regulatory scrutiny of trading stability, payments reliability, and systemic risk.
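The difference between raw speed and determinism shows up in percentiles rather than averages. The sketch below uses synthetic, illustrative samples, not measurements: both profiles have almost the same mean, but one has a heavy tail. The 99th percentile uses the nearest-rank method, jitter is taken here as the standard deviation, and the function name is hypothetical.

```python
import statistics


def latency_profile(samples_us: list[float]) -> dict[str, float]:
    """Summarise latency samples in microseconds: mean, p99 and jitter."""
    ordered = sorted(samples_us)
    rank = (99 * len(ordered) + 99) // 100  # nearest-rank p99: ceil(0.99 * n)
    return {
        "mean": statistics.fmean(ordered),
        "p99": ordered[rank - 1],
        "jitter": statistics.pstdev(ordered),  # population standard deviation
    }


if __name__ == "__main__":
    # Synthetic samples for illustration only, not measurements.
    steady = [10.0] * 1000               # deterministic path
    bursty = [9.0] * 980 + [60.0] * 20   # faster typically, heavy tail
    print("steady:", latency_profile(steady))
    print("bursty:", latency_profile(bursty))
```

The bursty profile is faster most of the time (9 µs against 10 µs), yet its p99 is six times worse, and it is the tail that hurts price execution and payment reliability.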

Threat Detection and Fraud in Encrypted Environments

Encrypted traffic reduces the fidelity of fraud detection and threat monitoring. Security teams rely more heavily on inference, metadata, and alerts rather than direct observation.

The consequence is slower detection and response. This delay is rarely attributed to encryption, yet it represents a real operational cost in financial environments where seconds matter.

Preparing for What Comes Next

Encryption challenges will intensify, not ease.

Post-Quantum Cryptography and Future Readiness

Post-quantum cryptography introduces additional computational load across existing architectures. For financial services, this is not a distant concern.

Long retention periods for transactional and customer data mean cryptographic decisions made today must withstand future threats. PQC transitions risk amplifying existing performance and complexity problems if built on fragile architectures.
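A common way to reason about this is Mosca's inequality: if the time data must stay confidential (x) plus the time needed to migrate to quantum-safe cryptography (y) exceeds the time until a cryptographically relevant quantum computer exists (z), that data is already at risk. A minimal sketch; the function names are hypothetical and the year values in the usage are placeholder assumptions, not forecasts.

```python
def mosca_at_risk(shelf_life_years: float, migration_years: float,
                  years_to_crqc: float) -> bool:
    """Mosca's inequality: data is at risk if x + y > z.

    x: how long the data must remain confidential
    y: how long migration to quantum-safe cryptography will take
    z: estimated years until a cryptographically relevant quantum computer
    """
    return shelf_life_years + migration_years > years_to_crqc


def exposure_years(shelf_life_years: float, migration_years: float,
                   years_to_crqc: float) -> float:
    """Years during which data encrypted just before migration completes
    would still be sensitive after a quantum computer arrives."""
    return max(0.0, shelf_life_years + migration_years - years_to_crqc)


if __name__ == "__main__":
    # Placeholder assumptions, not forecasts.
    print(mosca_at_risk(10, 5, 12), exposure_years(10, 5, 12))
```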

Crypto-agility becomes essential. Platforms that support algorithm updates in place reduce the need for disruptive refresh cycles. In this context, agility is a compliance enabler, not a performance optimisation.

Risk Management and Audit Blind Spots

Encryption performance and visibility are rarely modelled as first-class risks. Audits focus on configuration: is encryption present, enabled, documented?

What is harder to evidence is:

- Deterministic performance under stress

- Operational resilience

- Effectiveness of detection and response

Measuring encryption of financial data by outcomes rather than configuration is becoming unavoidable.

Rethinking Encryption for Financial Services Networks

An architectural rethink is underway.

From Cryptographic Control to Network Capability

The real cost of encrypting financial data is paid continuously: in performance, visibility, and operational effort. These costs are structural, not accidental, and they increase as networks scale.

Treating encryption purely as a cryptographic requirement creates false confidence. Security posture looks strong, while operational fragility grows beneath the surface.

What a Different Approach Looks Like

Financial services networks require encryption that preserves determinism, observability, and operational simplicity.

Network-native encryption platforms are designed to deliver:

- Deterministic, line-rate performance

- Centralised control and visibility

- Crypto-agility for post-quantum readiness

They demonstrate that strong encryption does not have to come at the expense of speed or resilience. When encryption is treated as a foundational network capability, security, compliance, and future readiness scale together.

Future-proof your encryption security today.

Discover how Sitehop delivers PQC-ready encryption for banking and financial services. Request a demo and see how encryption can protect financial data without becoming the bottleneck.

To find out more, email info@sitehop.com

Or call us: +44 (0)114 478 2366

QKD vs PQC: Selecting The Best Quantum Safe Solution

January 15, 2026 | Post Quantum Cryptography

Quantum computing is no longer a distant prospect; it is a fast-approaching disruptor to the cryptographic foundations of the internet. The same computational power that promises breakthroughs in science and artificial intelligence also threatens to render today’s encryption obsolete. As governments, enterprises and network operators prepare for this shift, two technologies have emerged as leading countermeasures: Quantum Key Distribution (QKD) and Post-Quantum Cryptography (PQC).

QKD harnesses the principles of quantum physics to distribute keys using photons, particles of light that change state when observed. In theory, this allows two parties to detect any eavesdropping attempt in real time. However, QKD depends on specialist optical hardware such as lasers, photon detectors and dedicated fibre links, making it complex and costly to deploy at scale.

PQC, by contrast, takes a different approach. It replaces traditional mathematical problems, such as integer factoring and elliptic-curve discrete logarithms, with new quantum-resistant ones, most notably lattice-based problems. These can run efficiently on existing CPUs, FPGAs and ASICs, allowing organisations to harden current networks against future quantum attacks without overhauling their infrastructure.

In short, both QKD and PQC aim to future-proof data in motion, but they take very different paths. Understanding where each fits is essential to building a practical, quantum-safe roadmap today. Let’s take a look at both approaches.

What is Quantum Key Distribution (QKD)?

Quantum Key Distribution (QKD) is a method of securely exchanging encryption keys by using the fundamental laws of quantum physics. Instead of relying on mathematical assumptions, QKD encodes cryptographic keys into quantum states of light, typically individual photons, that travel along an optical fibre or free-space channel. Because measuring a quantum state inevitably disturbs it, any attempt to intercept the transmission introduces detectable anomalies. This means that the two communicating parties can verify whether a key exchange has been compromised before using the key to encrypt data.

The key idea behind QKD is that it provides tamper evidence by design. If an eavesdropper tries to intercept or measure the quantum signals, the disturbance increases the quantum bit error rate (QBER), alerting the system to a potential breach. When implemented correctly, the resulting key can be proven to be secret, independent of the computational power of any adversary, even a quantum computer.

In theory, QKD offers information-theoretic security, meaning its protection does not depend on the hardness of a mathematical problem but on the immutable principles of quantum mechanics. This makes it a compelling option for organisations requiring the highest levels of assurance in key exchange, particularly over short, controlled optical links where physical infrastructure can be tightly managed.
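The tamper evidence described above can be illustrated with a toy simulation of BB84, the original QKD protocol. This is a sketch under strong assumptions: an ideal, noiseless channel and an intercept-resend eavesdropper, with the error rate computed over the whole sifted key rather than over a publicly compared sample, as real systems do. Under these assumptions the attack pushes the QBER to about 25%, well above the roughly 11% bound commonly cited for BB84. The threshold constant and function names are illustrative.

```python
import random

QBER_ABORT_THRESHOLD = 0.11  # commonly cited BB84 bound; real systems set their own


def bb84_qber(n_photons: int, eavesdrop: bool, seed: int = 1) -> float:
    """Toy BB84 run over an ideal channel; returns the sifted-key error rate.

    Bases: 0 = rectilinear, 1 = diagonal. Measuring in the wrong basis
    gives a uniformly random bit.
    """
    rng = random.Random(seed)
    errors = sifted = 0
    for _ in range(n_photons):
        bit, alice_basis = rng.randint(0, 1), rng.randint(0, 1)
        basis, value = alice_basis, bit      # state of the photon in flight
        if eavesdrop:                        # intercept-resend attack
            eve_basis = rng.randint(0, 1)
            if eve_basis != basis:
                value = rng.randint(0, 1)    # Eve's wrong-basis result is random
            basis = eve_basis                # Eve resends in her own basis
        bob_basis = rng.randint(0, 1)
        bob_bit = value if bob_basis == basis else rng.randint(0, 1)
        if bob_basis == alice_basis:         # sifting: keep matching bases only
            sifted += 1
            errors += bob_bit != bit
    return errors / sifted


def key_is_usable(qber: float) -> bool:
    """Abort key establishment when the error rate suggests interception."""
    return qber < QBER_ABORT_THRESHOLD


if __name__ == "__main__":
    for eve in (False, True):
        q = bb84_qber(20_000, eavesdrop=eve)
        print(f"eavesdropper={eve}: QBER={q:.3f}, usable={key_is_usable(q)}")
```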

Benefits

Eavesdropping detection by design

Measuring quantum states disturbs them, so an attacker shows up as a higher error rate (QBER). That gives you tamper evidence during key establishment.

Information-theoretic key secrecy (under the model)

With ideal devices and proper post-processing (error correction + privacy amplification), the generated key can be provably secret, independent of adversary compute power.

Good fit for controlled, short optical links

In metro/backbone segments with dark fibre and tight physical security, QKD can add a high-assurance layer for site-to-site keying.

Limitations

Limited range and scalability

Photons in standard fibre are absorbed and scattered; only about 10% of photons travel more than 50 km, and only 0.01% travel past 200 km. Extending range requires ultra-low-loss fibre and specialised repeaters; experimental demonstrations have achieved longer distances but remain costly and not widely available.
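These figures follow from fibre attenuation being measured in decibels: loss grows linearly with distance, so the surviving fraction of photons falls exponentially. The sketch assumes roughly 0.2 dB/km, a typical figure for standard single-mode fibre at 1550 nm; real links add connector, splice and detector losses on top, and the function name is hypothetical.

```python
def transmittance(distance_km: float, loss_db_per_km: float = 0.2) -> float:
    """Fraction of photons that survive a fibre span.

    Assumes uniform attenuation (default 0.2 dB/km, typical of standard
    single-mode fibre at 1550 nm) and ignores connector and splice losses.
    """
    loss_db = loss_db_per_km * distance_km  # dB loss is linear in distance
    return 10 ** (-loss_db / 10)            # so surviving power decays exponentially


if __name__ == "__main__":
    for km in (50, 100, 200):
        print(f"{km} km: {transmittance(km):.4%} of photons arrive")
```

At 0.2 dB/km, 50 km is 10 dB (10% of photons survive) and 200 km is 40 dB (0.01%), matching the figures quoted.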

Specialised hardware & cost

QKD needs dedicated lasers and photon detectors, and these requirements often drive high capital expenditure and major infrastructure changes.

No built‑in authentication

QKD alone does not prove the identity of the communicating parties; it must be combined with classical or post‑quantum cryptographic mechanisms to authenticate the source, otherwise a man‑in‑the‑middle attack is possible.

Deployment complexity & susceptibility

QKD channels are fragile; even inadvertent vibrations or deliberate tapping can cause the channel to abort and keys to be discarded.

Security in practice

Real‑world QKD systems have been plagued by implementation attacks such as side‑channel exploits and denial‑of‑service; moreover, QKD works reliably only over fibre or free‑space optics and is not feasible over typical wireless links.

Conclusions on QKD

In practice, QKD remains a niche technology. Its specialised optical hardware, high deployment cost and limited range make it suitable only for short, high-value connections where physical infrastructure can be tightly controlled. Typical use cases include financial trading networks, critical infrastructure control systems and defence communications, where the highest assurance justifies the expense. While QKD demonstrates the remarkable potential of quantum physics in cybersecurity, its practical adoption is restricted to specific, point-to-point scenarios rather than large-scale or cloud-based networks.

What is Post‑Quantum Cryptography (PQC)?

Post-Quantum Cryptography (PQC) refers to a new generation of cryptographic algorithms designed to remain secure even against powerful quantum computers. Instead of relying on the integer-factoring or elliptic-curve problems that a sufficiently large quantum computer running Shor’s algorithm could break efficiently, PQC uses mathematical problems that are believed to resist both classical and quantum attacks. These include lattice-based, hash-based, and code-based constructions. Because PQC runs on standard processors and hardware accelerators, it can be deployed through software updates or integrated into existing systems, providing a practical path to quantum-safe encryption across today’s global networks.

Benefits

Authenticates transmissions:

PQC signature algorithms produce digital signatures, and certificates built on them, that authenticate the sender, eliminating the need for separate authentication channels.

Standardisation & support:

ML-KEM (key encapsulation, FIPS 203), ML-DSA (digital signatures, FIPS 204) and SLH-DSA (stateless hash-based signatures, FIPS 205) are the latest standards from NIST. National agencies such as the US NSA and the UK NCSC, along with the cybersecurity bodies of the French, German, Dutch, Swedish and Czech governments, have all stated a clear preference for PQC over QKD.

Limitations

Large keys and computational overhead:

Some PQC schemes require much larger keys than today’s cryptosystems and can increase storage and processing overhead; resource‑constrained devices may need upgrades or hardware acceleration.
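To put "much larger" in numbers, the sketch below tabulates published sizes: ML-KEM-768 and ML-DSA-65 from FIPS 203 and FIPS 204, and X25519 and Ed25519 from RFC 7748 and RFC 8032. The helper function is hypothetical and counts only key-exchange payload bytes, ignoring protocol framing.

```python
# Sizes in bytes: ML-KEM-768 (FIPS 203), ML-DSA-65 (FIPS 204),
# X25519 (RFC 7748) and Ed25519 (RFC 8032).
KEM_SIZES = {
    # name: (initiator share / public key, responder reply / ciphertext)
    "X25519": (32, 32),
    "ML-KEM-768": (1184, 1088),
}
SIGNATURE_SIZES = {
    # name: (public key, signature)
    "Ed25519": (32, 64),
    "ML-DSA-65": (1952, 3309),
}


def key_exchange_bytes(*schemes: str) -> int:
    """Key-exchange payload on the wire; several names model a hybrid."""
    return sum(sum(KEM_SIZES[s]) for s in schemes)


if __name__ == "__main__":
    classical = key_exchange_bytes("X25519")
    hybrid = key_exchange_bytes("X25519", "ML-KEM-768")
    print(classical, hybrid, round(hybrid / classical, 1))  # 64 2336 36.5
    for name, (pk, sig) in SIGNATURE_SIZES.items():
        print(name, "public key:", pk, "signature:", sig)
```

A hybrid X25519 plus ML-KEM-768 exchange carries 2,336 bytes of key material against 64 bytes for X25519 alone, roughly 36 times more, and an ML-DSA-65 signature is over 50 times the size of an Ed25519 one.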

Algorithm maturity:

Several PQC candidates are still being evaluated, and some experimental schemes — such as the Supersingular Isogeny Key Encapsulation algorithm (SIKE) — have been broken by classical attacks.

Conclusions on PQC

Despite a few limitations such as larger key sizes and higher computational overhead, Post-Quantum Cryptography remains the only practical path to securing Internet-scale communications against quantum threats. Its algorithms can be deployed through software or hardware updates across existing infrastructure, making PQC the foundation for real-world quantum-safe encryption. As global standards mature and adoption accelerates, PQC enables organisations to protect data in motion today while remaining resilient to the quantum challenges of tomorrow.

QKD vs PQC: At a Glance

The table below summarises how QKD and PQC compare across critical categories.

Use cases: where each technology fits

Quantum Key Distribution (QKD) is best suited for:

- Securing site‑to‑site links: QKD can add quantum‑grade key distribution to encrypted tunnels between high‑value sites (e.g., data‑centre interconnects).

- Short backbone segments: It can protect short optical fibre links between cities or cloud regions, provided dedicated fibre is available.

- High‑value transactions and critical control links: Banks, utilities or defence networks may consider QKD to ensure real‑time commands cannot be tapped.

- Research & niche use: Governments and some national programmes (e.g., China and South Korea) are experimenting with QKD networks, but these are costly and limited.

Post‑Quantum Cryptography (PQC) applies broadly:

- Quantum‑safe IPsec, TLS and VPNs: PQC upgrades current key exchange and signature algorithms in Internet and private WAN traffic.

- Securing routers and network control planes: PQC can protect routing protocols and device firmware, enabling quantum‑resilient control and management traffic.

- Cloud and multi-site data migration: It helps ensure that encrypted transfers between cloud regions or providers remain safe even decades from now.

- Resilient SD‑WAN overlays and partner interconnects: PQC provides high‑speed, quantum‑resistant encryption across dynamic multi‑site networks.

- Edge and IoT deployments: Software‑based PQC can run on a wide range of devices; hardware‑accelerated platforms like Sitehop’s SAFEcore can deliver sub‑microsecond latency.

Conclusion & next steps

Post-Quantum Cryptography (PQC) provides the most practical and scalable route to quantum-safe security today. Its ability to integrate with existing networks, hardware and encryption standards makes it the clear choice for protecting data in motion across global infrastructures, including telecom backbones, data centres, critical industrial control systems and cloud environments.

While QKD offers unique advantages for tightly controlled, high-assurance optical links, PQC delivers the flexibility and performance required for Internet-scale protection.

If you are planning your quantum-safe migration strategy or want to understand how PQC can enhance your network architecture, contact Sitehop for expert advice and tailored solutions.

To find out more, read our QKD vs PQC review.

Or email info@sitehop.com

Or call us: +44 (0)114 478 2366

Sitehop.

Engineered for speed. Built for the future.

The boardroom gap: why quantum risk is becoming a governance problem in Financial Services.

January 13, 2026 | Post Quantum Cryptography

Most boards are already stretched.

AI is reshaping operating models. Ransomware is routine. Regulators are asking tougher questions about resilience, third-party exposure and systemic risk. Against that backdrop, it is tempting to treat quantum security as something to worry about later.

That assumption is becoming dangerous.

Quantum risk does not behave like a future problem. It behaves like a slow-burn governance issue.

This is not about physics. It is about longevity.

When boards hear the word quantum, they often think academic research or experimental technology. Something abstract and comfortably distant from enterprise risk. That framing is wrong.

Post-quantum cryptography is not about quantum computers suddenly breaking everything overnight. It is about whether the data being protected today will still be protected when it still matters.

Financial institutions hold data that must remain confidential for decades. Client and transaction records. Trading strategies. Proprietary models. Long-term contracts. Adversaries understand this. That is why the threat model has already shifted to ‘steal now, decrypt later’.

Data is being harvested today on the assumption that future computing power will make current encryption obsolete. From a governance perspective, the question is no longer when quantum arrives. It is whether today’s controls will still hold in the long term.

A familiar pattern for Financial Services

This pattern is well known in banking and insurance.

Technical teams see emerging risks early. They understand the mechanics and the timelines. Boards tend to engage later, often when regulators, auditors or peers start asking uncomfortable questions.

We have seen this before. With cloud adoption. With ransomware. With artificial intelligence.

Quantum is following the same curve, with one crucial difference. By the time the risk becomes obvious at board level, the ability to respond cleanly may already be gone.

You cannot rotate decades of cryptography overnight. You cannot easily replace certificates embedded across complex estates. And you cannot do either calmly once regulatory scrutiny has begun.

Regulation is already moving

Quantum risk is no longer hypothetical because regulation is no longer neutral.

Frameworks such as DORA, NIS2 and PCI DSS 4 may not always name post-quantum cryptography explicitly, but the direction of travel is clear. Regulators are signalling expectations around long-term confidentiality, crypto-agility and preparedness for next-generation threats.

For regulated institutions, waiting for certainty is not a strategy. It is a postponement.

The real gap is governance, not capability

In most organisations, this is not a technical blind spot.

Security teams are already assessing exposure. Architects know where cryptography lives. CISOs understand the risks around certificate sprawl and algorithm longevity.

The problem is how quantum risk is framed at board level. It is often treated as a technical footnote rather than a business risk.

That creates a governance gap:

No clear ownership. No agreed time horizon. No decision point for when ‘not urgent’ becomes ‘too late’.

Because nothing breaks immediately, the risk slips quietly down the agenda. Until it does not.

We have solved problems like this before

Boards do not need to understand quantum in technical depth. But they do need to own it. That ownership starts with practical questions:

- Which data must remain secure for the next 20 or 30 years?

- Where does encryption sit across the estate?

- How quickly could the organisation adapt if standards change?

For many institutions, answering those questions reveals something uncomfortable. Cryptography is often deeply embedded, poorly inventoried and hard to change quickly.

This is where early, pragmatic intervention matters. Introducing crypto-agility, particularly at the network layer, creates optionality. It buys time. It reduces disruption.

It is not about betting on one algorithm or one future standard. It is about ensuring the organisation can move when it needs to.

A quiet test of stewardship

For boards, quantum risk is ultimately about stewardship.

Protecting value over time. Avoiding avoidable disruption. Staying ahead of regulatory expectation rather than reacting under pressure.

Quantum risk may not be loud yet. But it is persistent.

And like most governance failures, it only becomes obvious once ignoring it becomes far more expensive than acting early.

To find out more, email info@sitehop.com

Or call us: +44 (0)114 478 2366


5 Best Post Quantum Encryption Solutions for Telecoms & 5G Networks

October 21, 2025 | Post Quantum Cryptography, Telco

The telecom and 5G networking landscape demands solutions that can keep pace with rising data rates, deliver operational efficiency, and counter emerging cybersecurity threats such as quantum computing. Traditional encryption methods, while foundational, can impose significant latency and complexity, and the classical public-key algorithms they rely on are not built to withstand quantum attacks.

Post-quantum cryptography (PQC) is emerging as the critical safeguard, enabling carriers to secure data in motion against both today’s attacks and tomorrow’s quantum breakthroughs. A new generation of solutions, from hardware-accelerated platforms like Sitehop’s through to more flexible software-defined approaches, is reshaping how operators think about latency, scalability, and resilience.

This article explores the leading post-quantum encryption technologies that will define the secure future of telecom and 5G infrastructure.

Why telcos/5G providers need quantum‑safe encryption now

5G networks rely heavily on public-key cryptography for device authentication and for the key exchanges that establish encryption keys. These algorithms (RSA and elliptic-curve schemes) depend on mathematical problems that are hard for classical computers to solve but could be solved quickly by a sufficiently large quantum computer. Experts warn that such a cryptographically relevant quantum computer (CRQC) could arrive within the decade (nist.gov), yet updating cryptography across modern networks typically takes 10–20 years (nist.gov).

Unlike the Y2K bug, which had a fixed date, the arrival of quantum computers is uncertain, and the threat may materialise before many systems have been upgraded. To make matters worse, adversaries are already collecting encrypted data in the hope of decrypting it later with quantum machines, a tactic known as “harvest now, decrypt later” (nist.gov).

Nation-states are believed to be stockpiling sensitive encrypted traffic (techtarget.com), so critical data exchanged on 5G networks could be compromised years down the line if providers do not start adopting post-quantum cryptography (PQC) soon.
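The timing argument above is often summarised as Mosca's inequality. Let x be the number of years data must stay confidential, y the time the migration to quantum-safe cryptography takes, and z the number of years until a CRQC exists. Data is then at risk whenever x + y > z. The Python sketch below encodes that check. The class name and the example inputs are hypothetical. The inputs loosely follow the ranges quoted above and are not forecasts.

```python
from dataclasses import dataclass


@dataclass
class QuantumExposure:
    """Mosca's inequality check; all values are in years (illustrative only)."""
    shelf_life: float      # x: how long the data must remain confidential
    migration_time: float  # y: time to move systems to quantum-safe cryptography
    time_to_crqc: float    # z: assumed time until a CRQC exists

    def at_risk(self) -> bool:
        # Traffic captured until migration finishes must stay secret for x more
        # years, so it is exposed if a CRQC arrives before y + x.
        return self.shelf_life + self.migration_time > self.time_to_crqc

    def overshoot(self) -> float:
        # How far x + y exceeds z; 0.0 means the inequality is not violated.
        return max(0.0, float(self.shelf_life + self.migration_time - self.time_to_crqc))


if __name__ == "__main__":
    # Hypothetical scenario: 10-year confidentiality, 10-year migration
    # (low end of the 10-20 year range), CRQC assumed in 10 years.
    scenario = QuantumExposure(shelf_life=10, migration_time=10, time_to_crqc=10)
    print(scenario.at_risk(), scenario.overshoot())  # True 10.0
```

Of the three variables, migration time y is the one an operator can most directly influence.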

How we compared the top solutions

We have compared the top solutions using a range of evaluation criteria, including latency, throughput, tunnel capacity, standards support (RFC 8784, 9242, 9370), crypto-agility, integration with existing routing hardware and post-quantum readiness.

Hardware acceleration is common across high-speed enterprise platforms: vendors use ASICs, NPUs or FPGAs to offload cryptography. The key distinction is data-path placement, that is, where packets land first. In FPGA-first encryptors, frames enter the hardware pipeline directly, so the latency-sensitive bulk cryptography executes entirely in silicon with minimal queuing. The result is deterministic, ultra-low latency and jitter with very low CPU load.

In feature-first security gateways, even those with powerful crypto ASICs, packets typically traverse classification, policy and session handling, and service frameworks before or around the IPsec engine, with controlled CPU assist for complex cases. This yields rich L4–L7 capabilities (application ID, IDS/IPS, SD-WAN, service chaining), at the cost of modestly higher and more variable latency than a pure hardware pipeline. Both approaches are valid: the former fits high-fan-in backhaul and line-rate encryption, while the latter excels at the edge and service layers, where policy and application context matter.

The best post‑quantum solutions for telecoms

Sitehop SAFEcore 1000: Benchmark for deterministic Post Quantum Encryption

- Positioning: FPGA-powered IPsec aggregator offering sub-microsecond latency, 8,000 tunnels and 200 Gb/s full duplex per 1U, with optional ML-KEM and RFC 9370 support.

- Key advantages: Deterministic latency under load; crypto‑agile updates; compact 1U form factor; ideal for high‑fan‑in IPsec aggregation.

- Deployment: Offload encryption in the core/backhaul while using existing gateways/NGFWs for policy and application control.

Fortinet FortiGate (FortiOS 7.6+): Flexible NGFW with PQC & QKD

- Positioning: Widely deployed NGFW/SD‑WAN platform with built‑in quantum‑safe features.

- Key features: IPsec key exchange now supports NIST-approved ML-KEM-512/768/1024 (docs.fortinet.com); FortiOS allows stacking multiple KEMs to create hybrid keys and includes UI/CLI controls for additional key exchanges (docs.fortinet.com).

- QKD readiness: Fortinet introduced QKD integration starting with FortiOS 7.4; the platform works with leading QKD vendors to provide quantum-generated keys (thefastmode.com).

- Use case: Good for edge/regional deployments needing policy inspection and multiple PQC on‑ramps (e.g., RFC 8784 mixing, ML‑KEM hybrid).

Palo Alto Networks PAN‑OS 11.2: Multi‑KEM IKEv2 and NGFW features

- Positioning: NGFW with advanced VPN controls enabling hybrid key exchange.

- Key features: Uses RFC 9242 and RFC 9370 to perform multiple successive key exchanges; by combining classical (EC)DH with one or more post‑quantum KEMs, the shared key remains secure if any algorithm holds.

- Flexibility: Administrators can specify up to seven additional KEMs and optionally mix in RFC 8784 pre‑shared keys; ideal for phased migration.

- Considerations: Provides deep policy and threat‑inspection capabilities but may introduce higher latency compared with purpose‑built hardware accelerators.
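The successive key exchanges described in the PAN-OS entry can be illustrated with a simplified model of the key-update rule in RFC 9370. After each additional exchange, the new shared secret is folded into the previous derivation key, so the final key depends on every exchange. This Python sketch assumes HMAC-SHA-256 as the PRF and uses random bytes as stand-ins for the (EC)DH and KEM shared secrets. The function names are hypothetical. It omits SPIs, the full set of SK_* keys and all IKE message handling, so it is not an interoperable implementation.

```python
import hashlib
import hmac
import os


def prf(key: bytes, data: bytes) -> bytes:
    # Assumption: the negotiated IKEv2 PRF is HMAC-SHA-256.
    return hmac.new(key, data, hashlib.sha256).digest()


def prf_plus(key: bytes, seed: bytes, length: int) -> bytes:
    # prf+ as defined in RFC 7296: T1 = prf(K, S | 0x01), Tn = prf(K, Tn-1 | S | n).
    out, block, counter = b"", b"", 1
    while len(out) < length:
        block = prf(key, block + seed + bytes([counter]))
        out += block
        counter += 1
    return out[:length]


def chain_key_exchanges(shared_secrets: list[bytes], ni: bytes, nr: bytes) -> bytes:
    """Return a simplified SK_d after folding in every shared secret in order.

    shared_secrets[0] stands in for the initial (EC)DH result; the remaining
    entries stand in for additional KEM exchanges (up to seven in RFC 9370).
    SPIs and the other SK_* keys are omitted for brevity.
    """
    # Initial derivation, as in IKE_SA_INIT: SKEYSEED = prf(Ni | Nr, g^ir).
    skeyseed = prf(ni + nr, shared_secrets[0])
    sk_d = prf_plus(skeyseed, ni + nr, 32)
    # Each additional exchange: SKEYSEED(n) = prf(SK_d(n-1), SK(n) | Ni | Nr).
    for secret in shared_secrets[1:]:
        skeyseed = prf(sk_d, secret + ni + nr)
        sk_d = prf_plus(skeyseed, ni + nr, 32)
    return sk_d


if __name__ == "__main__":
    ni, nr = os.urandom(32), os.urandom(32)        # stand-in nonces
    ecdh, ml_kem = os.urandom(32), os.urandom(32)  # stand-in shared secrets
    key = chain_key_exchanges([ecdh, ml_kem], ni, nr)
    # Replacing either input secret yields a different key.
    assert key != chain_key_exchanges([ecdh, os.urandom(32)], ni, nr)
    print(key.hex())
```

Because every secret feeds the chain, an attacker who breaks the classical exchange but not the KEM (or the reverse) still cannot reproduce SK_d, assuming the PRF is secure.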

Juniper SRX/vSRX (Junos 22.4R1+): QKD integration & quantum‑safe IPsec

- Positioning: Carrier‑class firewall platform with IPsec, MACsec and QKD capabilities.

- Quantum key manager: Junos Key Manager supports quantum key manager profiles; these profiles access QKD devices to generate fresh quantum keys for each connection and use them as post‑quantum pre‑shared keys.

- PPK mixing & QKD: Static key profiles can be used to inject post-quantum pre-shared keys (RFC 8784), while dynamic profiles fetch keys from QKD devices; QKD uses quantum channels to generate identical keys and protect both data and control planes.

- Real-world validation: A 2025 proof-of-concept with Turkcell, Juniper and ID Quantique demonstrated that integrating QKD with Juniper’s MACsec/IPsec frameworks protected mobile backhaul without performance loss.

- Use case: Suitable for operators seeking QKD‑ready solutions and strong service‑chain functions (firewall, NAT, QoS) alongside PQC.
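Static and QKD-fed key profiles share the same PPK mixing step, sketched below in Python. Under RFC 8784, the IKEv2 keys SK_d, SK_pi and SK_pr are first derived as usual. Each is then re-derived using the post-quantum pre-shared key as the prf+ key, so an attacker who later breaks the (EC)DH exchange still lacks the PPK. The sketch assumes HMAC-SHA-256 as the PRF. `mix_ppk` is a hypothetical helper, and it omits the PPK negotiation and identification that a real IKE_AUTH exchange performs.

```python
import hashlib
import hmac


def prf(key: bytes, data: bytes) -> bytes:
    # Assumption: the negotiated IKEv2 PRF is HMAC-SHA-256.
    return hmac.new(key, data, hashlib.sha256).digest()


def prf_plus(key: bytes, seed: bytes, length: int) -> bytes:
    # prf+ from RFC 7296: T1 = prf(K, S | 0x01), Tn = prf(K, Tn-1 | S | n).
    out, block, counter = b"", b"", 1
    while len(out) < length:
        block = prf(key, block + seed + bytes([counter]))
        out += block
        counter += 1
    return out[:length]


def mix_ppk(ppk: bytes, sk_d: bytes, sk_pi: bytes, sk_pr: bytes) -> tuple[bytes, bytes, bytes]:
    """RFC 8784-style mixing: each key K is replaced by prf+(PPK, K), keeping its length.

    The PPK may come from a static profile or from a QKD key manager; the
    mixing step is the same either way.
    """
    return (
        prf_plus(ppk, sk_d, len(sk_d)),
        prf_plus(ppk, sk_pi, len(sk_pi)),
        prf_plus(ppk, sk_pr, len(sk_pr)),
    )


if __name__ == "__main__":
    # Hypothetical inputs: keys from a classical (EC)DH exchange and a 32-byte PPK.
    classical = (bytes(32), bytes([1]) * 32, bytes([2]) * 32)
    ppk = bytes([0xAA]) * 32
    mixed = mix_ppk(ppk, *classical)
    print([k.hex()[:16] for k in mixed])
```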